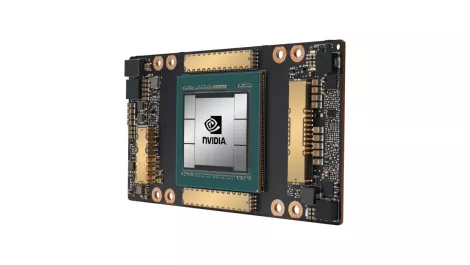

Google Cloud picks up NVIDIA A100 Tensor Core GPUs in alpha

NVIDIA's A100 Tensor Core GPU is now available in alpha on Google Compute Engine, barely a month after it was introduced.

Google Cloud is the first cloud provider to offer the new NVIDIA GPU, as part of its Accelerator-Optimised VM (A2) instance family.

According to NVIDIA, use cases for A100 in cloud data centers include a broad range of compute-intensive applications, such as AI training and inference, data analytics, scientific computing, genomics, edge video analytics, 5G services, and more.

The NVIDIA A100 GPU is built on NVIDIA's new Ampere architecture, which the company says is designed for the 'age of elastic computing'.

According to NVIDIA, the A100 GPU can deliver up to 20 times the training and inference performance of the previous generation.

"Google Cloud customers often look to us to provide the latest hardware and software services to help them drive innovation on AI and scientific computing workloads," says Manish Sainani, director of product management at Google Cloud.

"With our new A2 VM family, we are proud to be the first major cloud provider to market NVIDIA A100 GPUs, just as we were with NVIDIA T4 GPUs. We are excited to see what our customers will do with these new capabilities," Sainani continues.

NVIDIA adds that the GPU will help organisations scale up scientific computing, AI training and inference applications, and even enable real-time conversational AI.

The A2 VM instances can deliver different levels of performance to efficiently accelerate CUDA-enabled workloads across machine learning training and inference, data analytics and high-performance computing.

For the largest workloads, Google Compute Engine offers the a2-megagpu-16g instance, which comes with 16 A100 GPUs, a total of 640GB of GPU memory and 1.3TB of system memory, all connected through NVSwitch with up to 9.6TB/s of aggregate bandwidth.

For smaller workloads, A2 VMs also come in configurations with fewer GPUs, allowing organisations to match GPU compute power to their application needs.

"We look forward to seeing how you use this infrastructure for your compute-intensive projects," say Google's Cloud GPUs product manager Chris Kleban and product manager Bharath Parthasarathy.

Google Cloud states that additional NVIDIA A100 support is coming soon to Google Kubernetes Engine, Cloud AI Platform and other Google Cloud services.

The first NVIDIA A100 instances are currently available through Google Cloud's private alpha programme, and Google expects to announce public availability later in the year.